The world of software development is constantly evolving, and one concept that stands out in this fast-paced landscape is concurrent I/O. As applications demand more efficiency and responsiveness, understanding how to manage input/output operations concurrently becomes essential. Whether you’re building a web server, working with databases, or developing interactive user interfaces, non-blocking I/O can noticeably improve throughput and responsiveness when your application spends much of its time waiting on I/O.

Imagine your application handling multiple tasks at once without waiting for each operation to complete. That is the power of concurrent I/O. In this blog post, we will explore concurrency and parallelism, blocking and non-blocking I/O, the event loop, and a simple TCP server, and look at why they matter in modern programming practice.

Concurrent Code Execution

Concurrent code execution refers to a system’s ability to make progress on multiple tasks in overlapping time periods, which improves performance and responsiveness. As applications grow in complexity, developers must prioritize efficient resource management, ensuring that one slow operation does not block others from executing.

This efficiency is crucial in environments where latency matters. By leveraging concurrent I/O strategies, programmers can create robust systems capable of scaling effectively while maintaining high throughput. The ongoing evolution of technology only amplifies the need for mastering this essential skill set.

Introduction to Concurrency and Parallelism

Concurrency and parallelism are core concepts in computer science that are often confused. Concurrency is the ability of a system to make progress on multiple tasks during overlapping time periods, even if only one of them is running at any given instant. It’s about structuring code so that operations can be interleaved.

On the other hand, parallelism is all about executing multiple tasks at the same time across different processors or cores. While both aim for improved efficiency, their approaches vary significantly in implementation and outcome.
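To make the distinction concrete, here is a minimal sketch of concurrency without parallelism, using Python's `asyncio`. The names `worker` and `run_interleaved` are ours, chosen for illustration. Both workers run on a single thread and take turns, so their log entries interleave even though nothing executes at the same instant.

```python
import asyncio


async def worker(name: str, steps: int, log: list) -> None:
    """Append one entry per step, yielding to the event loop between steps."""
    for i in range(steps):
        log.append(f"{name}{i}")
        # sleep(0) suspends this coroutine so another ready task can run.
        await asyncio.sleep(0)


async def run_interleaved() -> list:
    log = []
    # Both workers share ONE thread: they are concurrent, not parallel.
    await asyncio.gather(worker("a", 3, log), worker("b", 3, log))
    return log


if __name__ == "__main__":
    print(asyncio.run(run_interleaved()))
```

Running the two workers in parallel instead would require separate threads or processes scheduled on different cores.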

Processes versus Threads

A process is a running instance of a program that operates independently. Each process has its own memory space and resources, which keeps it isolated from crashes in other processes. This isolation comes with overhead: creating a process and switching between processes cost more than the equivalent thread operations.

Threads, on the other hand, exist within a process. They share the same memory space, so they can communicate simply by reading and writing shared data. This makes threads lighter and faster to create than processes, but it also adds complexity: unsynchronized concurrent access to shared data can cause race conditions and corrupt it.
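As a small illustration of shared memory between threads, the sketch below has several threads increment one counter. The function name `count_with_threads` and its parameters are hypothetical. The lock is what protects the shared value from lost updates.

```python
import threading


def count_with_threads(n_threads: int = 4, increments: int = 10_000) -> int:
    """Have several threads increment one shared counter under a lock."""
    counter = 0
    lock = threading.Lock()

    def work() -> None:
        nonlocal counter
        for _ in range(increments):
            # The lock makes the read-modify-write step exclusive;
            # without it, concurrent updates could be lost.
            with lock:
                counter += 1

    threads = [threading.Thread(target=work) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter


if __name__ == "__main__":
    print(count_with_threads())
```

Separate processes would each get their own copy of `counter`, so they would need an explicit channel such as a pipe or a queue to combine results.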

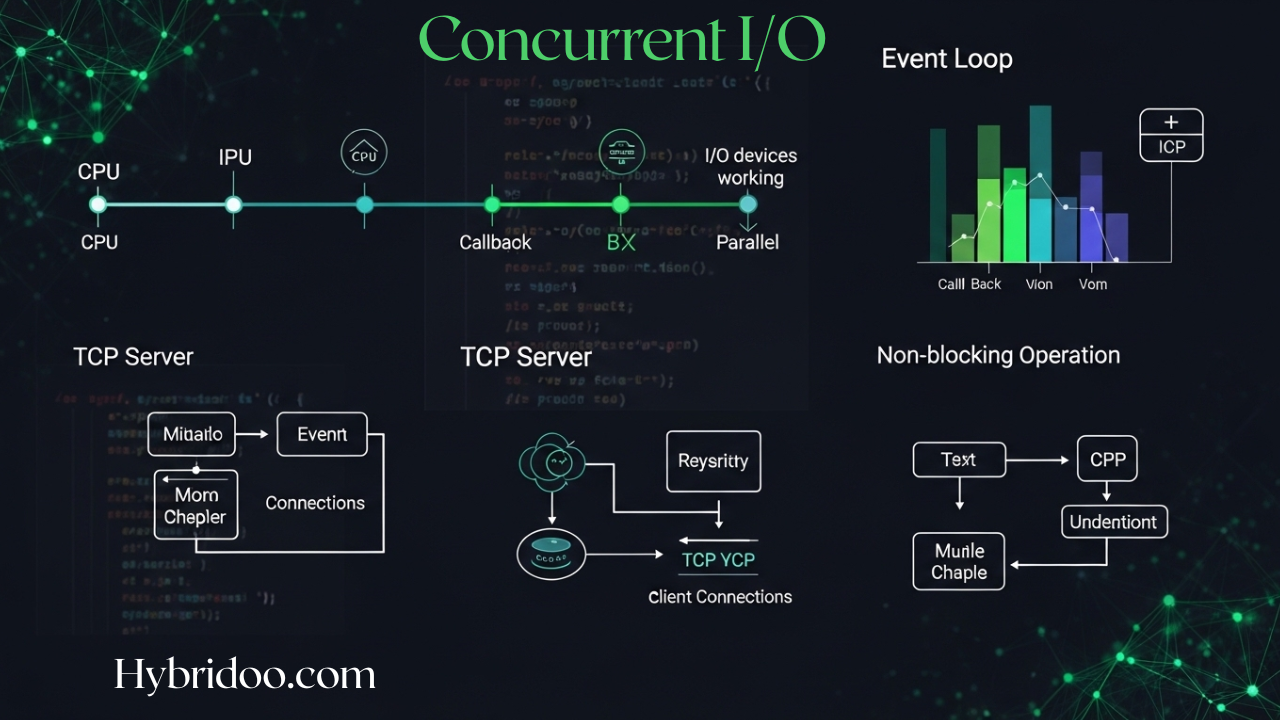

CPU versus I/O

The CPU and I/O operations serve different purposes within a computer system. The CPU, or central processing unit, is responsible for executing instructions, performing calculations, and managing tasks. It operates at incredible speeds but can become bottlenecked when waiting for data from slower devices.

On the other hand, I/O refers to input/output operations that move data between the computer and external devices such as disks or networks. These operations are typically orders of magnitude slower than CPU instructions, so a program that simply waits for each one wastes CPU time it could spend on other work.
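The sketch below shows why I/O-bound work benefits from concurrency. It assumes `time.sleep` is a fair stand-in for a slow disk or network call, and `fake_io` and `timed_overlap` are illustrative names. Because the threads spend their time waiting rather than computing, the waits overlap and the total elapsed time stays close to one delay rather than the sum of all of them.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_io(delay: float) -> float:
    """Stand-in for a disk or network call: the thread just waits."""
    time.sleep(delay)
    return delay


def timed_overlap(n_tasks: int = 4, delay: float = 0.2) -> float:
    """Run n_tasks simulated I/O waits in a thread pool; return elapsed seconds."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=n_tasks) as pool:
        # map() submits every task up front, so the waits run side by side.
        list(pool.map(fake_io, [delay] * n_tasks))
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"4 waits of 0.2 s took {timed_overlap():.2f} s in total")
```

The same trick does not help CPU-bound work in the same way, because there the threads compete for the processor instead of waiting on a device.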

Blocking versus Non-Blocking I/O

Blocking I/O waits for an operation to complete before moving on. If a read is in progress, the calling thread is suspended until the data arrives, and in a single-threaded program that means the whole program halts. Such behavior leads to inefficiency, especially when handling multiple tasks.

Non-blocking I/O returns control to the caller immediately, even when the operation cannot complete yet, so the same thread can do other work and come back once the data is ready. It uses system resources more effectively and reduces idle time, making it particularly useful in high-concurrency environments like web servers.
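Here is a minimal sketch of the difference, using a connected socket pair inside one process. The helper names `try_recv` and `demo` are ours. In non-blocking mode, `recv` raises `BlockingIOError` instead of waiting when no data has arrived, which leaves the program free to do something else.

```python
import select
import socket


def try_recv(sock: socket.socket):
    """Return received bytes, or None if no data is ready yet."""
    try:
        return sock.recv(1024)
    except BlockingIOError:
        # Non-blocking mode: the call returns at once instead of waiting.
        return None


def demo() -> tuple:
    a, b = socket.socketpair()  # two connected sockets in one process
    with a, b:
        b.setblocking(False)
        before = try_recv(b)  # nothing has been sent yet
        a.sendall(b"ping")
        # Wait (up to 1 s) until b is readable before trying again.
        select.select([b], [], [], 1.0)
        after = try_recv(b)
    return before, after


if __name__ == "__main__":
    print(demo())
```

With `b` left in its default blocking mode, the first `recv` would have suspended the thread until some data showed up.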

Modern Web Servers’ Adoption of Async Non-Blocking Model

Modern web servers are increasingly adopting the asynchronous non-blocking model to enhance performance. This approach allows them to handle multiple requests simultaneously without getting bogged down by any single operation.

By utilizing event-driven architectures, these servers can efficiently manage I/O operations. As a result, they offer faster response times and improved scalability. Technologies like Node.js exemplify this trend, allowing developers to build high-performance applications that effectively leverage concurrent I/O capabilities.

Busy-Waiting, Polling, and the Event Loop

Busy-waiting occurs when a process continuously checks for a condition to be met, consuming CPU cycles unnecessarily. This method can lead to inefficiency, especially in systems where resources are limited.

Polling is another approach where the program checks the status of an I/O operation at set intervals. While more efficient than busy-waiting, it still spends processing time on checks that find nothing and adds latency of up to one interval. The event loop offers a smarter alternative: it asks the operating system which I/O sources are ready, sleeps until at least one is, and then runs the handler registered for each ready source, so other operations proceed while I/O is pending.
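To show the idea, here is a deliberately tiny event loop built on Python's `selectors` module. It is a sketch, not how any particular framework implements its loop, and `run_event_loop` is a hypothetical name. Each readable socket is registered with a callback, and the loop sleeps inside `select()` until the operating system reports that something is ready.

```python
import selectors
import socket


def run_event_loop(messages: list) -> list:
    """Tiny event loop: sleep in select() until a socket becomes readable."""
    sel = selectors.DefaultSelector()
    received = []
    sockets = []
    for msg in messages:
        writer, reader = socket.socketpair()
        reader.setblocking(False)
        # The callback to run when `reader` is readable travels in `data`.
        sel.register(reader, selectors.EVENT_READ, data=received.append)
        writer.sendall(msg)
        sockets += [writer, reader]

    pending = len(messages)
    while pending:
        # No busy-waiting: the thread sleeps until the OS reports readiness.
        events = sel.select(timeout=1.0)
        if not events:
            break  # nothing arrived within the timeout; give up
        for key, _mask in events:
            on_readable = key.data
            on_readable(key.fileobj.recv(1024))
            sel.unregister(key.fileobj)
            pending -= 1

    sel.close()
    for s in sockets:
        s.close()
    return received


if __name__ == "__main__":
    print(run_event_loop([b"one", b"two", b"three"]))
```

The order in which ready sockets are reported is up to the operating system, so the results may not come back in the order they were sent.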

TCP Server Implementation

Implementing a TCP server involves creating a listening socket that waits for client connections. Once a connection is established, the server can handle multiple clients using non-blocking I/O operations, which allows it to process requests without stalling other ongoing tasks.

This model enhances efficiency by managing concurrent I/O through event-driven architecture. Instead of blocking while waiting for data, the server can continue executing other operations, ensuring responsiveness and better resource utilization across all connected clients.
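The following is one possible sketch of such a server, written with Python's `asyncio` streams rather than raw sockets. The echo protocol, the loopback address, and the names `handle_client`, `client`, and `echo_many` are all assumptions made for the example. The server listens on a free port, and several clients talk to it concurrently on a single thread.

```python
import asyncio


async def handle_client(reader: asyncio.StreamReader,
                        writer: asyncio.StreamWriter) -> None:
    """Echo each line back until the client disconnects."""
    while line := await reader.readline():
        writer.write(line)
        await writer.drain()
    writer.close()
    await writer.wait_closed()


async def client(port: int, message: bytes) -> bytes:
    """Connect, send one line, and return the echoed reply."""
    reader, writer = await asyncio.open_connection("127.0.0.1", port)
    writer.write(message + b"\n")
    await writer.drain()
    reply = await reader.readline()
    writer.close()
    await writer.wait_closed()
    return reply.rstrip(b"\n")


async def echo_many(messages: list) -> list:
    # Port 0 asks the OS for any free port; we read back the one it chose.
    server = await asyncio.start_server(handle_client, "127.0.0.1", 0)
    port = server.sockets[0].getsockname()[1]
    async with server:
        # All clients are in flight at once; none blocks the others.
        return await asyncio.gather(*(client(port, m) for m in messages))


if __name__ == "__main__":
    print(asyncio.run(echo_many([b"a", b"b", b"c"])))
```

Each `await` marks a point where the server may be waiting on the network, and the event loop uses those pauses to serve other clients.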

Future Directions: Concurrency Patterns, Futures, Promises, and More

The future of concurrent I/O is exciting, with emerging concurrency patterns paving the way for more efficient applications. Developers are increasingly leaning on concepts like futures and promises to simplify complex asynchronous workflows.

These tools empower programmers to manage tasks without blocking, enhancing responsiveness in software. As technologies evolve, the integration of these patterns will likely redefine how we approach scalability and performance in programming environments. The potential for innovation is immense as new strategies continue to emerge.
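As a rough illustration of futures in practice, the sketch below uses `asyncio`, where a `Future` is a placeholder for a value that arrives later and a `Task` is a future that wraps a running coroutine. The `fetch` coroutine, its delays, and the result strings are hypothetical stand-ins for real requests.

```python
import asyncio


async def fetch(name: str, delay: float) -> str:
    """Hypothetical stand-in for a network or disk request."""
    await asyncio.sleep(delay)
    return f"{name} done"


async def load_all() -> list:
    loop = asyncio.get_running_loop()

    # A bare Future: someone else will fill in the result later.
    promise = loop.create_future()
    loop.call_later(0.01, promise.set_result, "config loaded")

    # Tasks start running as soon as they are created.
    tasks = [asyncio.create_task(fetch(n, 0.02)) for n in ("users", "orders")]

    config = await promise                  # suspends without blocking the loop
    results = await asyncio.gather(*tasks)  # results keep the input order
    return [config, *results]


if __name__ == "__main__":
    print(asyncio.run(load_all()))
```

Promises in JavaScript play much the same role: the caller gets a handle right away and attaches the follow-up work to it instead of waiting.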

FAQs

Understanding concurrent I/O can raise several questions. Many people wonder what exactly I/O concurrency entails and how it affects system performance.

Another common inquiry is about the relationship between CPU tasks and I/O operations. Can they truly operate at the same time? It’s essential to grasp these concepts for effective programming, especially when dealing with modern applications that demand efficiency and speed in data handling.

What is I/O concurrency?

I/O concurrency refers to the ability of a system to handle multiple input/output operations simultaneously. This is crucial in programming, especially for applications that require high performance and responsiveness.

By leveraging concurrent I/O, developers can efficiently manage tasks like reading from or writing to files, databases, or network connections without blocking other processes. This allows systems to remain agile and resourceful while executing multiple operations at once.

What does concurrent programming do?

Concurrent programming allows multiple tasks to make progress simultaneously. This can occur on a single processor by switching between tasks or across multiple processors, enhancing efficiency.

By utilizing concurrency, programs can handle more operations at once without waiting for each task to complete before starting the next one. It improves responsiveness and resource utilization in applications, especially when dealing with I/O-bound processes like network requests or file systems.

Can I/O devices and the CPU execute concurrently?

Yes, I/O devices and the CPU can execute concurrently. This allows for efficient resource utilization, as the CPU can process instructions while waiting for data transfers to complete.

When an I/O operation is in flight, the CPU isn’t stalled: the device controller, often using direct memory access (DMA), moves the data and raises an interrupt when it finishes, so the CPU can execute other tasks in the meantime. This concurrency enables smoother multitasking in applications and improves overall system performance. By leveraging this model, developers create responsive software that handles multiple operations at once without unnecessary delays.

What does “concurrent” mean?

Concurrent refers to the ability of a system to manage multiple tasks in overlapping time periods. Processes or threads do not have to wait for one another to finish: while one task is waiting for a resource, another can be executed instead.

In programming, concurrency enables more efficient use of the CPU and of I/O devices by allowing their work to overlap. Understanding this concept is crucial for optimizing applications and ensuring they run smoothly under various workloads. Embracing concurrent I/O approaches leads to better performance and responsiveness in modern computing environments.